Unsafe action recognition in underground coal mine based on cross-attention mechanism


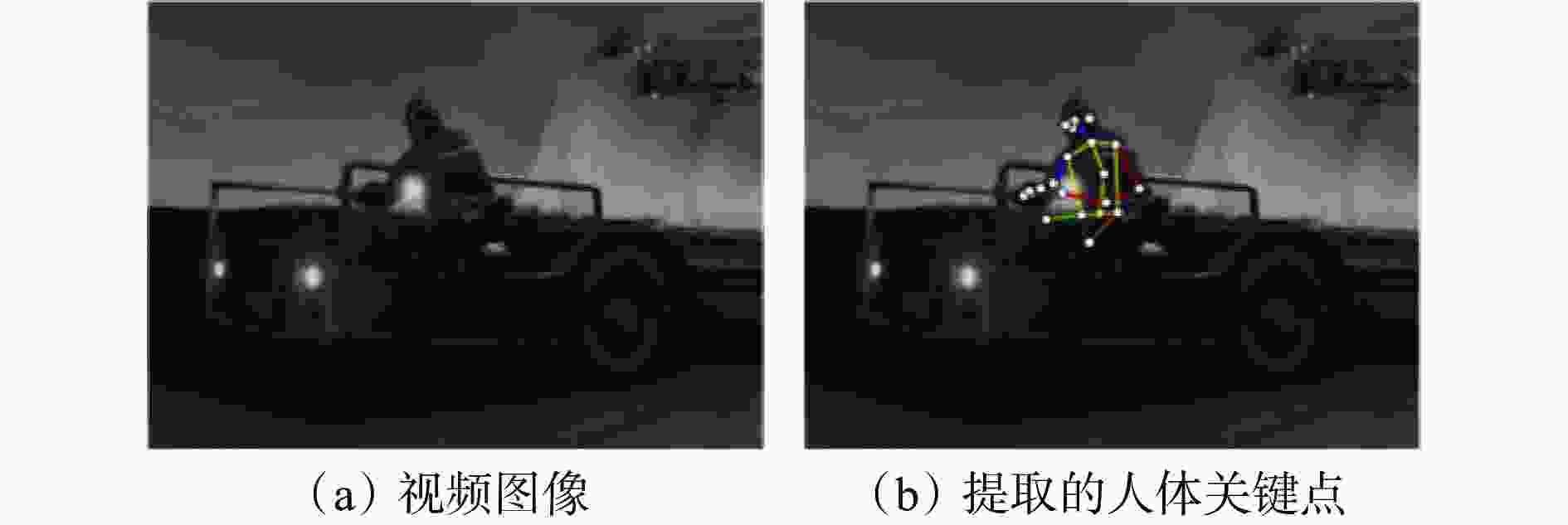

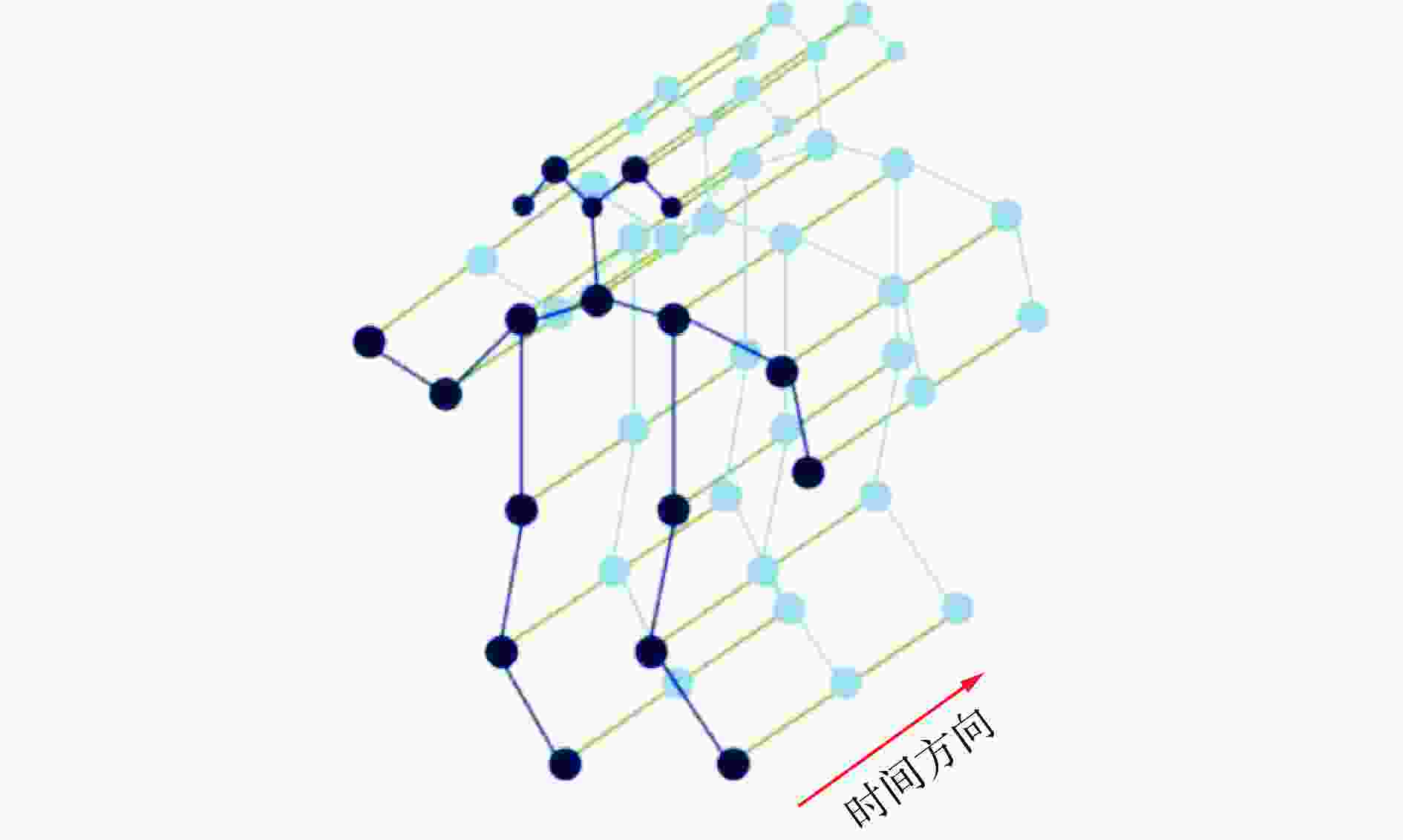

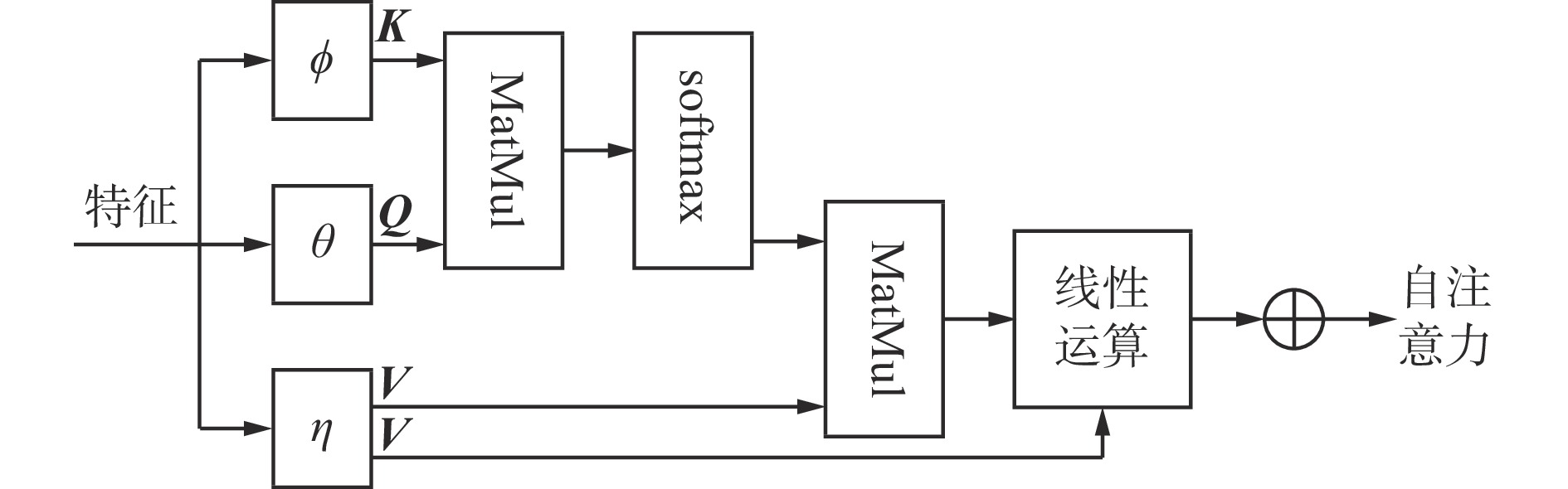

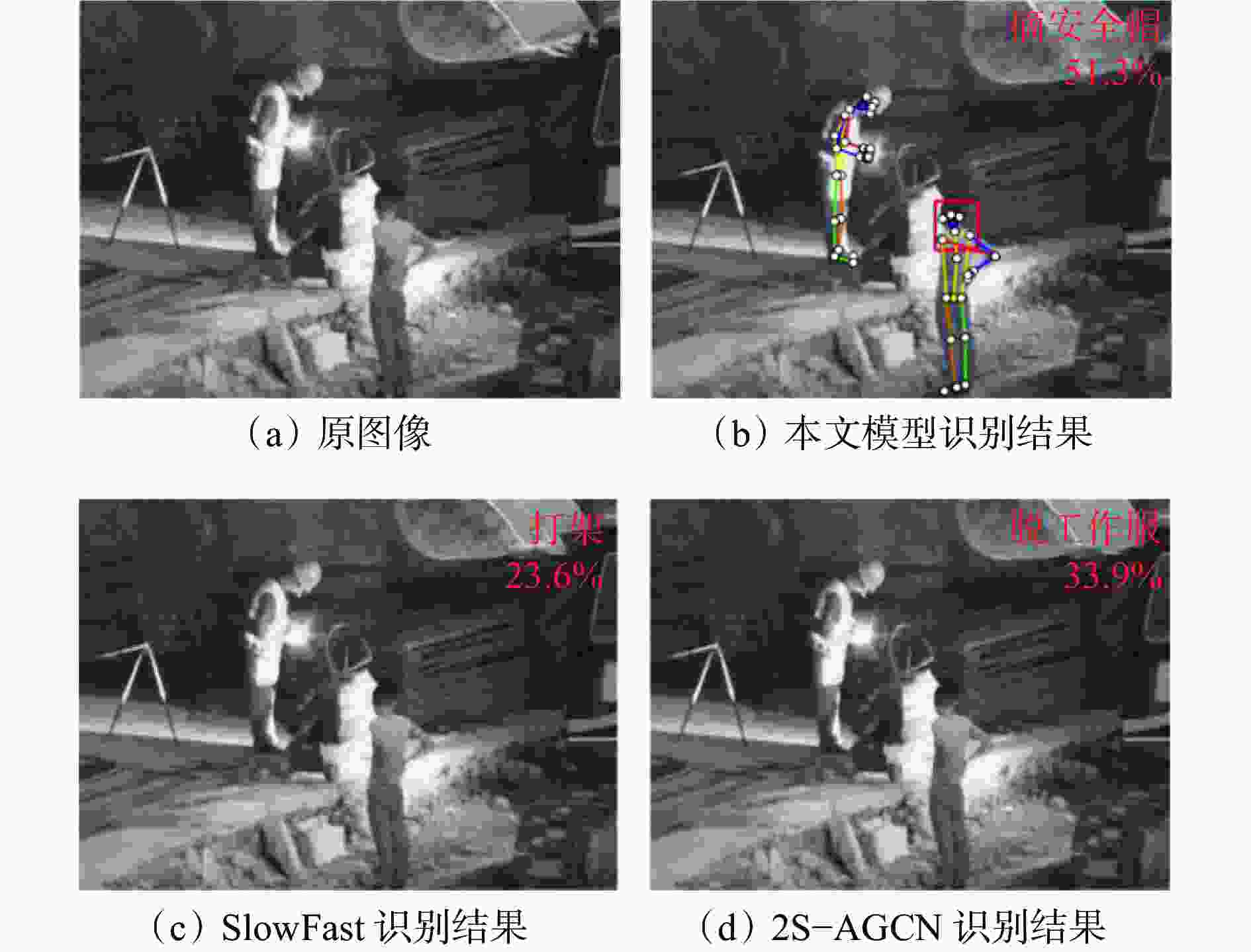

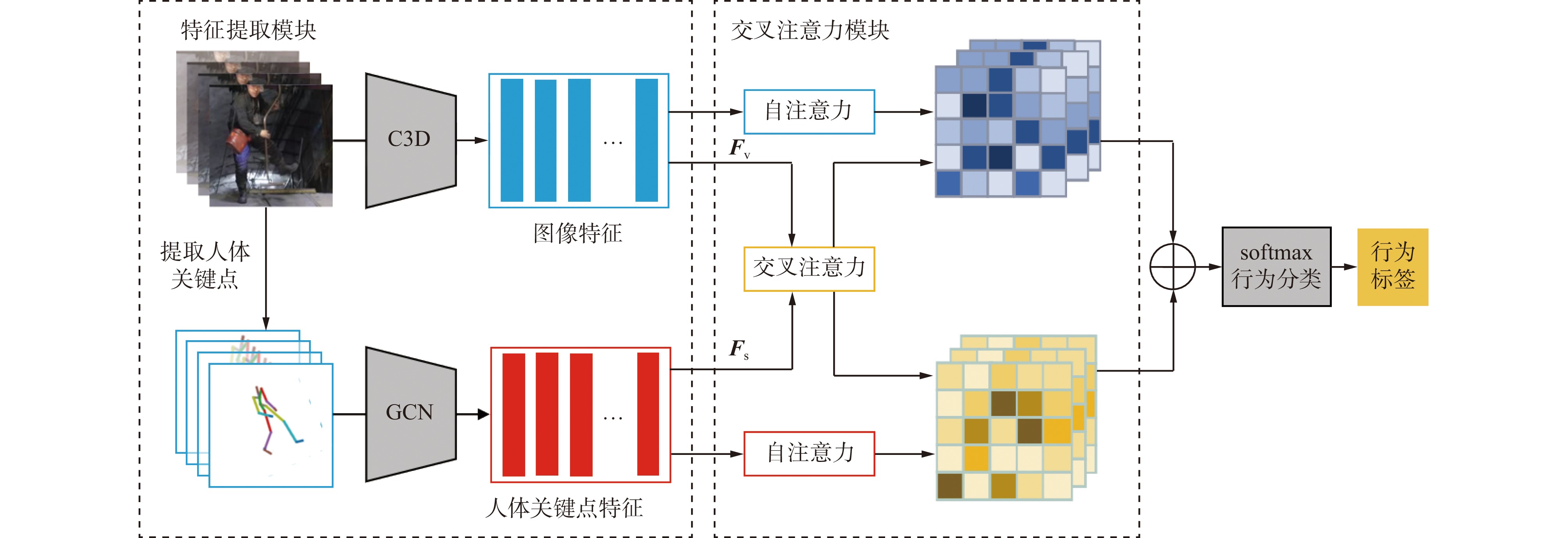

Abstract: Real-time video monitoring of unsafe actions by underground coal mine personnel, with alarms, is an important means of improving production safety. The underground environment is complex and the quality of surveillance video is poor, which limits the use of conventional action recognition methods based on image features or on human key point features. A multi-feature fusion action recognition model based on a cross-attention mechanism is proposed to recognize unsafe actions of underground personnel. For segmented video, a 3D ResNet101 model extracts image features, while the OpenPose algorithm and ST-GCN (spatial temporal graph convolutional network) extract human key point features. The cross-attention mechanism fuses the image features and the human key point features, and the fused features are concatenated with the image features and human key point features processed by a self-attention mechanism to obtain the final action recognition features. These features are passed through a fully connected layer and the softmax (normalized exponential) function to obtain the action recognition result. Action recognition experiments were carried out on the public datasets HMDB51 and UCF101 and on a self-built underground coal mine video dataset. The results show that the cross-attention mechanism enables the model to fuse image features and human key point features more effectively and greatly improves recognition accuracy. Compared with SlowFast, currently the most widely used action recognition model, the proposed model improves recognition accuracy by 1.8 and 0.9 percentage points on HMDB51 and UCF101, respectively, and by 6.7 percentage points on the self-built dataset. This verifies that the multi-feature fusion action recognition model based on the cross-attention mechanism is better suited to recognizing unsafe actions of personnel in the complex underground coal mine environment.
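The fusion pipeline described in the abstract can be sketched as follows. This is a minimal illustration, not the authors' implementation: the abstract does not specify the attention formulation, so scaled dot-product attention is assumed, and the feature dimensions, random projection weights, bidirectional cross-attention, and temporal mean pooling are all hypothetical choices. Random arrays stand in for the outputs of 3D ResNet101 and ST-GCN.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable normalized exponential function."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(query_src, kv_src, w_q, w_k, w_v):
    """Scaled dot-product attention (assumed form).

    Self-attention when query_src is kv_src; cross-attention otherwise.
    """
    q, k, v = query_src @ w_q, kv_src @ w_k, kv_src @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores, axis=-1) @ v

rng = np.random.default_rng(0)
num_segments, dim, num_classes = 8, 16, 6  # hypothetical sizes

# Stand-ins for per-segment features from the two branches.
image_feats = rng.standard_normal((num_segments, dim))  # 3D ResNet101 branch
pose_feats = rng.standard_normal((num_segments, dim))   # OpenPose + ST-GCN branch

def projections():
    """Three random projection matrices (untrained, illustration only)."""
    return [rng.standard_normal((dim, dim)) / np.sqrt(dim) for _ in range(3)]

# Self-attention within each modality.
image_self = attention(image_feats, image_feats, *projections())
pose_self = attention(pose_feats, pose_feats, *projections())

# Cross-attention between modalities (both directions, an assumption).
image_cross = attention(image_feats, pose_feats, *projections())
pose_cross = attention(pose_feats, image_feats, *projections())

# Concatenate fused and self-attended features, then pool over segments.
fused = np.concatenate(
    [image_cross, pose_cross, image_self, pose_self], axis=-1
).mean(axis=0)

# Fully connected layer followed by softmax gives class probabilities.
w_fc = rng.standard_normal((fused.shape[0], num_classes)) / np.sqrt(fused.shape[0])
probs = softmax(fused @ w_fc)
print(probs.shape, float(probs.sum()))
```

In cross-attention the queries come from one modality and the keys and values from the other, so each image segment is re-expressed in terms of the key point features it matches best, and vice versa. The self-attention branches keep a modality-specific view alongside the fused one.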


Table 1. Accuracy of different action recognition models on public datasets (%)

| Model | HMDB51 | UCF101 |
| --- | --- | --- |
| C3D | 56.8 | 82.3 |
| 3D ResNet101 | 61.7 | 88.9 |
| TSN | 68.5 | 93.4 |
| SlowFast | 72.3 | 95.8 |
| ST-GCN | 48.6 | 78.3 |
| 2S-AGCN | 51.8 | 80.2 |
| Proposed model | 74.1 | 96.7 |

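Table 2. Ablation experiment results (accuracy, %)

| Image feature extraction network | Human key point feature extraction network | Self-attention mechanism | Cross-attention mechanism | HMDB51 | UCF101 |
| --- | --- | --- | --- | --- | --- |
| √ | × | × | × | 61.7 | 88.9 |
| √ | × | √ | × | 63.3 | 89.7 |
| × | √ | × | × | 48.6 | 78.3 |
| × | √ | √ | × | 51.8 | 81.4 |
| √ | √ | × | × | 63.2 | 88.5 |
| √ | √ | √ | × | 69.0 | 92.7 |
| √ | √ | √ | √ | 74.1 | 96.7 |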

Table 3. Accuracy of different action recognition models on the self-built underground video dataset (%)

| Model | Accuracy |
| --- | --- |
| C3D | 75.4 |
| 3D ResNet101 | 78.7 |
| TSN | 81.5 |
| SlowFast | 84.6 |
| ST-GCN | 63.4 |
| 2S-AGCN | 70.9 |
| Proposed model | 91.3 |

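Table 4. Recognition accuracy of the proposed model for different action categories (%)

| Action category | Accuracy |
| --- | --- |
| Smoking | 93.7 |
| Fighting | 91.5 |
| Loitering | 93.2 |
| Falling | 95.8 |
| Removing safety helmet | 84.2 |
| Taking off work clothes | 89.4 |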
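The gains quoted in the abstract can be reproduced from the tabulated accuracies. The short check below copies the relevant values from Tables 1 to 3 and computes the differences in percentage points; the dictionary layout and the `gain` helper are illustrative names, not part of the original work.

```python
# Accuracy (%) copied from Tables 1-3.
accuracy = {
    "HMDB51": {"SlowFast": 72.3, "without_cross_attention": 69.0, "proposed": 74.1},
    "UCF101": {"SlowFast": 95.8, "without_cross_attention": 92.7, "proposed": 96.7},
    "self-built": {"SlowFast": 84.6, "proposed": 91.3},
}

def gain(dataset, baseline):
    """Accuracy gain of the proposed model over a baseline, in percentage points."""
    row = accuracy[dataset]
    return round(row["proposed"] - row[baseline], 1)

for dataset, row in accuracy.items():
    for baseline in row:
        if baseline != "proposed":
            print(f"{dataset}: +{gain(dataset, baseline)} points over {baseline}")
```

The SlowFast differences match the 1.8, 0.9 and 6.7 figures in the abstract. The ablation rows show that adding cross-attention on top of the self-attention variant accounts for 5.1 points on HMDB51 and 4.0 points on UCF101.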

